Image based on a pencil drawing made by Steven Bishop, inspired by an image from the 1971 book “Be Here Now” by Ram Dass (Richard Alpert)

In a recent class dialogue with Dr. Jovian Radheshwar’s INST 1100 students, we explored ideas from political scientist Dr. José Marichal, author of You Must Become an Algorithmic Problem: Renegotiating the Sociotechnical Contract. His work examines how our lives have become entwined with algorithms — systems that categorize, predict, and shape our digital experiences.

Here’s a simple way to think about the difference between social media and algorithms, drawing on José Marichal’s explanation, which came up in the class discussion:

- Social media is the visible layer — the apps and platforms where we post, share, and connect. It’s where we perform our identities and find community.

- Algorithms are the invisible layer — the systems behind those platforms that decide what we see, when we see it, and who sees us. They quietly shape our feeds, recommendations, and even our sense of what’s “normal.”

Social media is the stage. Algorithms are the directors. Marichal’s key point is that we often blame the stage but forget about the directors quietly shaping the show.

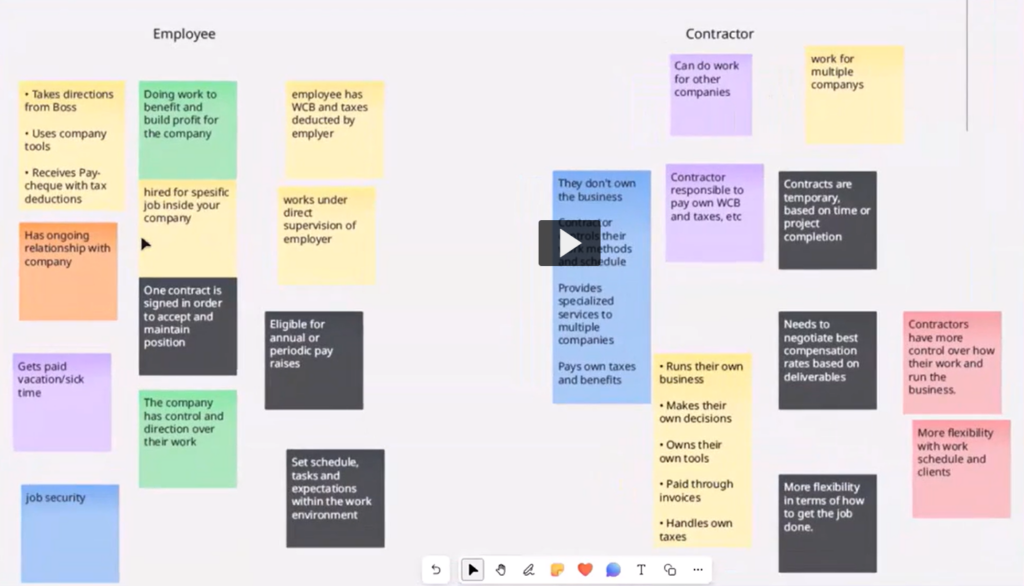

Dr. Marichal draws a parallel between the social contract — the implicit agreement we make with society to exchange certain freedoms for collective security — and what he calls the sociotechnical contract. In this new digital era, we have unwittingly traded our attention, privacy, and even aspects of independent thought for the conveniences of social media, algorithmic news feeds, and digital companionship.

The Attention Economy and the Outlier

Students were quick to recognize how easily social media can shape perception. One noted how their feed filled with political praise for one U.S. leader without presenting opposing views. Another shared how TikTok’s algorithms “trained” themselves to reflect heartbreak or attraction, depending on what held their attention longest.

Dr. Marichal’s warning is that algorithms thrive on generalization. They group us into categories that make us easier to sell to, sway politically, or polarize socially. The “outliers” — those who don’t fit the model — are vital to creativity, democracy, and human progress. As one student insightfully said, “You have to train your social media algorithm to what you actually want.”

Slowing Down as Resistance

We considered whether slowing down could itself be a political act. What if, in a world obsessed with speed, scrolling, and optimization, we simply paused?

One student responded:

If we just stopped using Facebook or Instagram, we could make the founders go bankrupt. Our attention is what they want — and we have the power to take it back.

Another reflected:

I think slowing down makes sense as a political act because if you can slow down, you can kind of rebel against capitalism. Like our time and our energy and our bodies are the currency that capitalism operates off of.

We discussed “attention” as the new frontier of civic power — something both tech companies and social movements compete for. Even mindfulness, once a personal wellness practice, becomes a political gesture when it interrupts the algorithm’s grip.

Mindfulness, Attention, and the Self

We discussed how mindfulness can help shift us from a “survivalist mind,” constantly reacting to fear, news, and comparison, to an “attentionist mind” capable of calm observation.

We even tried a simple exercise: lightly touching our fingertips together and noticing the subtle ridges of sensation. For a moment, attention was no longer a commodity — it was presence.

Another student mentioned that yoga, which his parents practice at home, can help reclaim this awareness:

to be at ease with yourself, at ease with your mind, at ease with, no matter what position you’re in, you’re still calm and composed and collected

Social Media for Connection

Not all stories were cautionary. One student described joining a run club he discovered through social media — a digital doorway to genuine human connection:

“Through that I met people I’d never otherwise meet. It’s social media being used in a positive way.”

Another student shared how social media fueled nationwide protests in Nepal by raising awareness of corruption:

“The government had to shut down the Internet to stop people from organizing. That shows the power of the right algorithm.”

From Kafka to the Classroom

The conversation eventually turned to Franz Kafka’s The Trial. Like Kafka’s protagonist Joseph K., who spends the last year of his life navigating an opaque bureaucracy without ever learning his crime, we risk spending our lives responding to systems we barely understand.

Will we one day be judged by how we used our attention?

Perhaps the antidote is awareness — of self, society, and the digital infrastructures mediating both. As one student summarized:

“It made us think about many ideas we hadn’t before. Slowing down might be one of the most radical things we can do.”

Related Resources

- “Personal Data and Society,” an episode of The Measure of Everyday Life featuring José Marichal, author of You Must Become an Algorithmic Problem: Renegotiating the Socio-Technical Contract.

- The Trial by Franz Kafka – Full book summary from SparkNotes

- Mindful Attentionist – Mitra Manesh, UCLA Center for Mindfulness Studies

- The Instagram Politician: Zohran Mamdani’s Rise And What It Reveals About American Politics

- Neurodiversity and the Myth of Normal (CBC Ideas podcast)